Ray tracing remains the headline feature in graphics, and Nvidia is looking for new ways to squeeze more frames out of it. The latest development comes from a research paper spotted by 0x22h, in which Nvidia and academic collaborators detail a technique called GPU Subwarp Interleaving.

Of course, nothing’s ever that simple in graphics card land. To make Subwarp Interleaving work, Nvidia would need to bake it into the microarchitecture. Your current RTX 30-series card won’t get faster ray tracing from it, no matter how many “Game Ready” drivers you install; this is future-silicon stuff.

Why Ray Tracing Trips Up GPUs

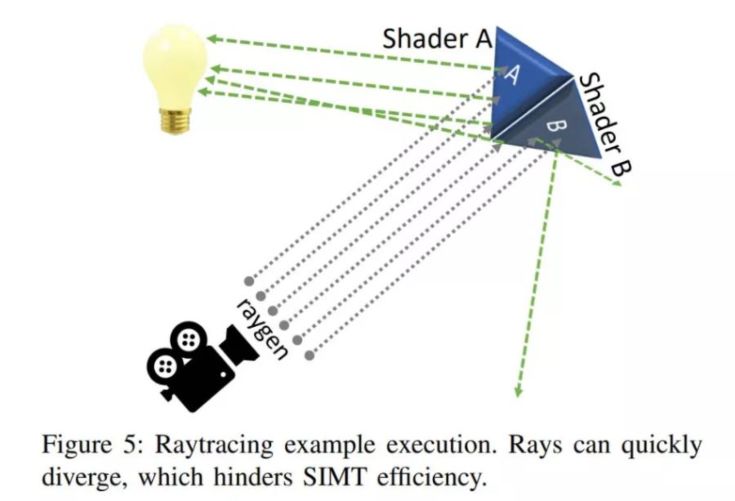

In the SIMT (single instruction, multiple threads) model, Nvidia GPUs issue a single instruction at a time to a bundle of 32 threads called a warp, so the threads in a warp advance in lockstep. When one warp stalls on a memory access or a branch, the scheduler hides the delay by swapping in another warp.

Ray tracing, though, is a scheduling nightmare. Rays bounce around unpredictably, so threads in the same warp end up taking different code paths and touching different memory. The result is warp divergence, where only part of a warp does useful work at any moment, and warp starvation, where the scheduler runs out of ready warps to issue. When you can’t hide latency with another warp, performance tanks.
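To make the cost of divergence concrete, here is a minimal Python sketch of a toy model, not Nvidia's actual hardware behavior. It assumes that divergent paths within a warp run one after another, and the path labels (`hit`, `miss`) and cycle costs are made-up numbers chosen only for illustration.

```python
from collections import Counter

WARP_SIZE = 32  # threads per warp on Nvidia GPUs


def simt_efficiency(branch_taken, path_cost):
    """Fraction of lane-cycles doing useful work in one warp (toy model).

    branch_taken: one path label per thread in the warp.
    path_cost: cycles needed to run each path (hypothetical values).
    """
    lanes_per_path = Counter(branch_taken)
    # Assumption: divergent paths are serialized, so the warp pays for
    # every path that at least one of its threads takes.
    total_cycles = sum(path_cost[path] for path in lanes_per_path)
    useful = sum(n * path_cost[path] for path, n in lanes_per_path.items())
    return useful / (len(branch_taken) * total_cycles)


cost = {"hit": 100, "miss": 100}             # hypothetical cycle counts
coherent = ["hit"] * WARP_SIZE               # every ray takes the same path
divergent = ["hit"] * 16 + ["miss"] * 16     # rays split evenly

print(simt_efficiency(coherent, cost))   # 1.0
print(simt_efficiency(divergent, cost))  # 0.5
```

In this model, an even two-way split leaves half of the warp's lanes idle at any given moment, which is the kind of waste incoherent rays tend to produce.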

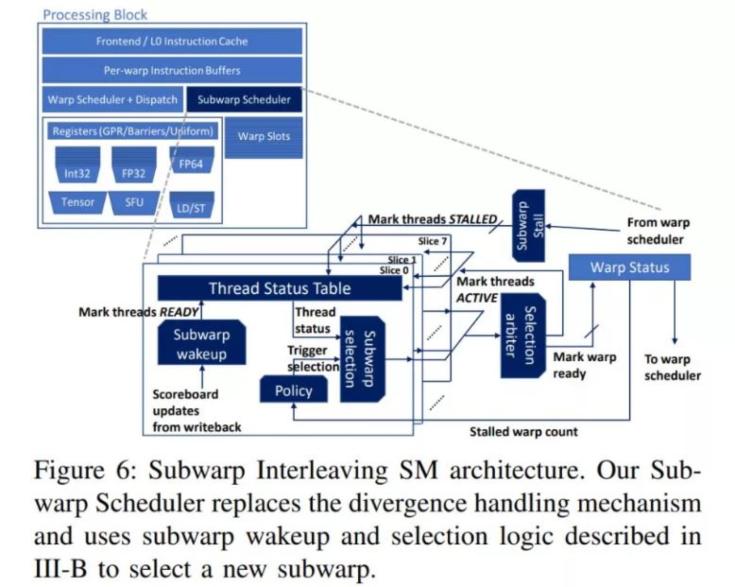

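That’s the problem Subwarp Interleaving targets. Instead of waiting on an entire stalled warp, the hardware splits a diverged warp into smaller subwarps and lets the scheduler interleave them, so one subwarp can run while another waits on memory.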
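The intuition can be sketched with a small cycle-counting simulation in Python. This is a toy model under loud assumptions, not the scheduler described in the paper: there is a single issue slot, no other warp is ready to hide latency (the starvation case), and each subwarp is a hypothetical list of `compute` and `stall` phases with invented cycle counts.

```python
def serialized_cycles(subwarps):
    """Baseline: each divergent path runs to completion before the next."""
    return sum(length for phases in subwarps for _, length in phases)


def interleaved_cycles(subwarps):
    """Toy interleaving: one subwarp may issue while others wait on memory."""
    pending = [[[kind, length] for kind, length in phases] for phases in subwarps]
    cycles = 0
    while any(pending):
        issued = False
        for phases in pending:
            if not phases:
                continue  # this subwarp has finished
            if phases[0][0] == "compute":
                if issued:
                    continue  # the single issue slot is taken this cycle
                issued = True
            # Memory stalls tick down in the background every cycle;
            # compute phases tick down only when they get the issue slot.
            phases[0][1] -= 1
            if phases[0][1] == 0:
                phases.pop(0)
        cycles += 1
    return cycles


# Two subwarps from one diverged warp: compute, wait on memory, compute.
path_a = [("compute", 4), ("stall", 10), ("compute", 4)]
path_b = [("compute", 4), ("stall", 10), ("compute", 4)]

print(serialized_cycles([path_a, path_b]))   # 36
print(interleaved_cycles([path_a, path_b]))  # 22
```

The saving comes entirely from overlapping one subwarp's memory wait with the other's compute. Real workloads would see far smaller gains than this contrived case, since other warps already hide much of the latency.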

The researchers, including Georgia Tech’s Sana Damani and Nvidia engineers such as Ram Rangan and Stephen W. Keckler, tested the idea on a modified Turing-like GPU. The results weren’t academic rounding errors: across a suite of ray tracing workloads, performance improved by an average of 6.3%, with the best case reaching almost 20%. For real-time graphics, that can be the difference between playable and very smooth.
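As a back-of-the-envelope illustration of what those percentages could mean in frames per second, here is a short Python sketch. It makes the optimistic assumption that the whole frame speeds up by the reported amount, which the paper does not claim, and the baseline frame rates are hypothetical.

```python
def fps_after_speedup(base_fps, speedup_pct):
    """Frame rate if the entire frame got faster by speedup_pct percent."""
    return base_fps * (1 + speedup_pct / 100)


# Hypothetical baselines; 6.3% is the reported average, 20% roughly the best case.
for base_fps in (30, 60):
    for speedup_pct in (6.3, 20):
        new_fps = fps_after_speedup(base_fps, speedup_pct)
        print(f"{base_fps} fps +{speedup_pct}% -> {new_fps:.1f} fps")
```

Under that assumption, a 60 fps baseline lands at roughly 64 fps for the average case and 72 fps for the best case.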

But this feature won’t simply unlock on an RTX 3080 via a driver update. Subwarp Interleaving demands architectural changes: Nvidia would need to design the new scheduling logic into future GPUs. So the earliest it’s likely to appear is a next-gen family such as Lovelace, Hopper, or whatever codename Team Green announces.

That’s how GPU R&D usually works. You see a research paper here, a SIGGRAPH demo there, and then two or three years later, it appears in shipping silicon under some marketing-friendly label like “RTX Ray Accelerator 2.0.”

Nvidia is obsessed with ray tracing, mostly because the tech is still a massive resource hog. Even on the fastest cards available, real-time light simulation remains a serious challenge. The strategy so far has been consistent: throw hardware at the problem, then claw back the performance hit with software. First came RT cores, then DLSS to render at a lower resolution and upscale. Now the company is digging into the microarchitecture with experiments like “Subwarp Interleaving,” basically a way to keep the GPU from idling while it waits on memory for divergent rays.

Even if this particular idea doesn’t ship exactly as is, it’s part of a long-term strategy: keep chipping away at the architectural bottlenecks that make ray tracing so resource hungry. AMD has been catching up with its RDNA2 implementation, but Nvidia wants to stay a step ahead, and microarchitecture-level optimizations like this one are how it plans to do it.